You flick the switch. A light comes on. You flick it again. The light goes off.

Flick. On.

Flick. Off.

Flick. On. And so on.

So how certain are you that flicking the switch caused the light to come on?

It's not a trick question, but you can take a pause to think if needed.

I suspect you are absolutely certain flicking the switch caused the light to come on. So am I. Let's explore why.

The first piece of information we have is an observed correlation (used colloquially) between two sets of measurements: the position of the switch, and whether the light is on or off. Now, if you've ever spent time around people who want to sound smarter than they are, you will have certainly heard the phrase, correlation is not causation (to be clear, I've said this too). And yet here we are with a correlation that we are certain is causal. So what separates this one from the others? How can we be so certain this correlation reflects a cause? You might pause again and jot down any thoughts you have.

Here's my list of things that materially contribute to our certainty.

The cause is a clearly defined, manipulable action. It is something we are doing. The effect is also clearly defined, and both are measured without error.

The effect is instantaneous. It is also consistent. We can repeat the "experiment" of flicking the switch and the result will be the same every time.

Our observations also fall in line with a lifetime's worth of switch flicking.

And, perhaps most importantly of all, we already "know" the mechanism. If you put a hole in the wall, you know you'll find wires. If the light doesn't come on, you know to check the bulb. We have hundreds of years of theory refined through observation and experimentation, eventually leading to the invention of the now ubiquitous light bulb. We know the switch caused the light to come on because “we” built it! It's that incredible sum of human effort and knowledge that truly justifies your certainty.

Unfortunately, the causal questions we tend to be interested in within medicine and public health aren't nearly so cut and dried. Patients and populations aren't light switches to be flicked on and off at will. Our interventions aren't nearly so crisply defined, even the ones that come in a pill. Outcomes are noisy, rarely instantaneous, and used inconsistently. Observations are precious, and not often generalizable across contexts. And we are deploying interventions into chemical, biological and social systems that are impossibly complex.

So should we throw our hands up and accept defeat? Should we end our pursuit of causality? Of course not. While we will never be as certain as we are about the light switch, many important causal questions in medicine and public health can be usefully pursued with the tools available to us. But our pursuit of causality will be hard. It'll take brains, skills, and preparation. And it will take investment of other people's money, including the public's, so it is especially important to do our best - or at least get the hell out of the way of those who are trying. There are unfortunately too many of us who don't.

The Clueless

This one is pretty obvious, so I'll be brief. The fact is, an awful lot of scientists working in medicine and health have only a rudimentary understanding of measurement, study design, statistical inference and decision-making. Given the other responsibilities clinician-researchers carry, these deficits are somewhat understandable, though the lack of complementary expert supports is not. But these deficits are also paradoxically rampant among pre-clinical and population-based researchers whose main job is to design, conduct, analyze and report scientific studies. Examples are trivially easy to find in medicine and health, and the overall body of evidence for these deficits is overwhelming. The Clueless has been written about at length, by many, over decades, and nothing is likely to change until we tackle the misplaced incentives that reward money-spent and papers-published over meaningful advancements in medicine and public health. The Clueless is, ultimately, a hulking noise-machine in service of managerial waste, and probably makes you as…irritable…as it makes me (unless of course you are an ignorant or cynical cog in said machine). And yet, there is worse.

The Hopeless

The Hopeless is what you get when The Clueless are so ignorant (or self-interested) they focus on questions that can't actually be scientifically addressed with the methods currently available to them, even if they did know how to properly use them. When the futility of their efforts is pointed out, The Hopeless often point to the importance of the topic their research relates to. BUT WHAT ABOUT THE CHILDREN DARREN!? But sadly, as useful as science is, it simply can't satisfactorily answer every question, even really important ones.

I was lucky to get an early introduction to The Hopeless. I started my research career as a PhD student studying the impact of perinatal malnutrition on lifelong health. I took a few classes on nutritional epidemiology along the way, and basically learned just how impossible it is to measure what people typically eat. Then we read all these papers about these nurses from the Victorian age and how the amount of porridge and straw they reported eating from 12 to 27.5 years of age was related to their heart-cheese levels at 50. The public health nutrition literature is full of this stuff. In a nutshell, large swaths of population-based nutrition research (but far from all of it!) are hopeless. Measurement is too poor. Confounding is too rampant. Samples are too selected.

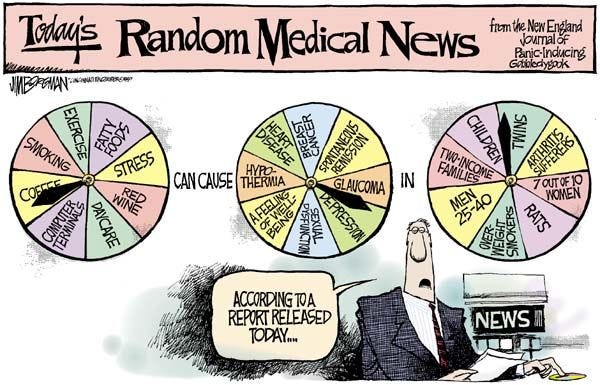

My evidence for this is the endless waves of contradictory articles on nutrition and health that can be found in every major newspaper. My evidence is in the endlessly rehashed debates about which subtle differences in perfectly reasonable diets will extend your life or help you lose weight. And it is found in the many pseudo-scientific grifters who are drawn to lifestyle and diet like moths to the flame, simply because it's about as far from the light switch as you can get. And since there is no empirical common ground to be found, it's more like religion than science. You say the gods want this. I'm doubtful. You point to some words on a parchment. I'm still doubtful. We get nowhere. You say the fuzzy-walnut diet is better than the roasted bird-claw diet for self-reported wellbeing in 30 years' time. I disagree. You point to some words in an article in MDPI Nutrients. I disagree. We get nowhere. It's the same thing. Hopeless.

The Hubris

As infuriating as The Hopeless can be, it's not as bad as watching medical and public health scientists actually refuse to answer an important causal question because they think they already know the answer. This is The Hubris. My “favorite” example of this was during the early days of the pandemic, when the utility of masking to protect individuals from COVID-19 and to reduce the spread of SARS-CoV-2 in the community came into sharp focus. A simplified timeline of events, as I remember them:

Early 2020: Don’t use higher quality masks because we need to preserve them for health care workers and we aren’t very confident they are useful as broad public health measures to prevent spread of respiratory viruses in the community. As a non-expert, this all seemed reasonable to me. Life marched on.

March 2020: Life comes to a halt. I am wiping down grocery bags and wondering which of my neighbors I would eat first. Wuhan. Bergamo. New York. Everybody is trying to find solutions for slowing the virus. Some of them are explaining that the virus spreads by clinging onto giant droplets so big that strapping a sock to your face could both protect you, and stop you from spreading the virus to others. This will spare high quality masks for health care workers, while protecting the rest of us from COVID. Ok, fine.

Some people question the efficacy of face-socks, but we are reassured by face-sock advocates that whatever we do we should not run any rigorous studies of the usefulness of masking, of any kind, in any context. There is no time. This is an emergency, and even a moment's delay in widely implementing what they'd already decided was important would be gravely perilous. Plus it would be unethical, since they already knew face-socks worked. Crafting face-socks becomes a cottage industry, and some otherwise smart people construct entire identities out of it.

Hold on, we are told, the virus might also spread via aerosols. Apparently aerosols are small. Everybody talks about aerosols, including many vocal face-sock advocates, despite the fact that this kind of rubbishes the mechanism of the face-socks. But we are told to keep it up anyway. I had already started purchasing KN95s.

Ok, maybe you should wear a surgical mask. No, wait, use a KN95. No, wait, get a full respirator. Wear them outside. Jog in them. No, not like that. Can we swim in them? Don't touch them. Dry them in the sun. No, wait, you need to wear them properly…

Wait. What!? Conduct research? Implementation science? WHAT KIND OF MONSTER ARE YOU!? We already know they work.

3 years now and counting: Masking becomes, and remains, an incredibly contentious topic. To say the least. And pretty much nobody is wearing one. Great work.

I can’t get past the feeling that we really could have generated some useful evidence in those first months that would have paid dividends over the next 3 years and beyond. Could we have convinced states and markets to mass produce and widely distribute high quality masks? Wouldn't that have been a good thing?

What The Hubris misunderstands (or ignores) is that, as a scientist, your opinion about an intervention, even an expert opinion, doesn't really matter. For example, in medicine, doctors form their own opinions about the usefulness of different treatments. They might draw on their own experiences, expert peer opinion, medical research, and official guidance. But at the end of the day, two smart, educated medics can disagree on the best course of action, and nobody bats an eye. And even when individual doctors feel certain that they already know what works best, we accept that this shouldn’t stop them from conducting clinical trials to generate convincing evidence about that intervention. What matters is that there is uncertainty among the community of decision makers. We call this equipoise.

Now bring this back to the pandemic. It doesn't really matter if you as a scientist already know the intervention works. What matters is that there is uncertainty among decision makers, and this uncertainty during the pandemic was constantly on display - for individuals, for governments, for everyone. That uncertainty is then an opportunity for you as the scientist to help through empirical research. Maybe that meant running a study where nobody wears the face-socks you were certain worked (at least until you were certain they didn't). But lots of people were already not wearing face-socks. Clearly the information they had available to them hadn't convinced them, and simply saying "trust us" isn't usually an effective strategy for changing that (partly because of The Clueless and The Hopeless). And maybe it's not going to be easy, and maybe it's going to cost money (gasp!). But we have to try. We have to advocate for the importance of science for improved decision-making, and the investments in research infrastructure to do it. Given how challenging this advocacy already is, it’s heartbreaking to see scientists undercut it by suggesting we already know the answers when we don’t.

Selected reading

Causation and Causal Inference in Epidemiology. Rothman and Greenland

Statistics and Causal Inference. Paul W. Holland

Research waste is still a scandal—an essay by Paul Glasziou and Iain Chalmers

Research: increasing value, reducing waste

Is the concept of clinical equipoise still relevant to research?

I agree strongly with much of this, but as someone who does (or tries to do) modeling, I don't know how easily studying system-level phenomena could adhere to some of these expectations. Some would say it can't: the whole endeavor is hopeless, not science, punishable by the death penalty, etc. The questions in this domain are difficult to pose and harder to answer. All modeling is a sort of fantasy (but frankly, I'm not sure it's that much more fantastical than regression). Sometimes coarse, qualitative insights are all you can get, and you don't know that they're leading you in the right direction. That can still be progress.

Anyway, I'm on the bus, and maybe in the bottom part of the triangle...